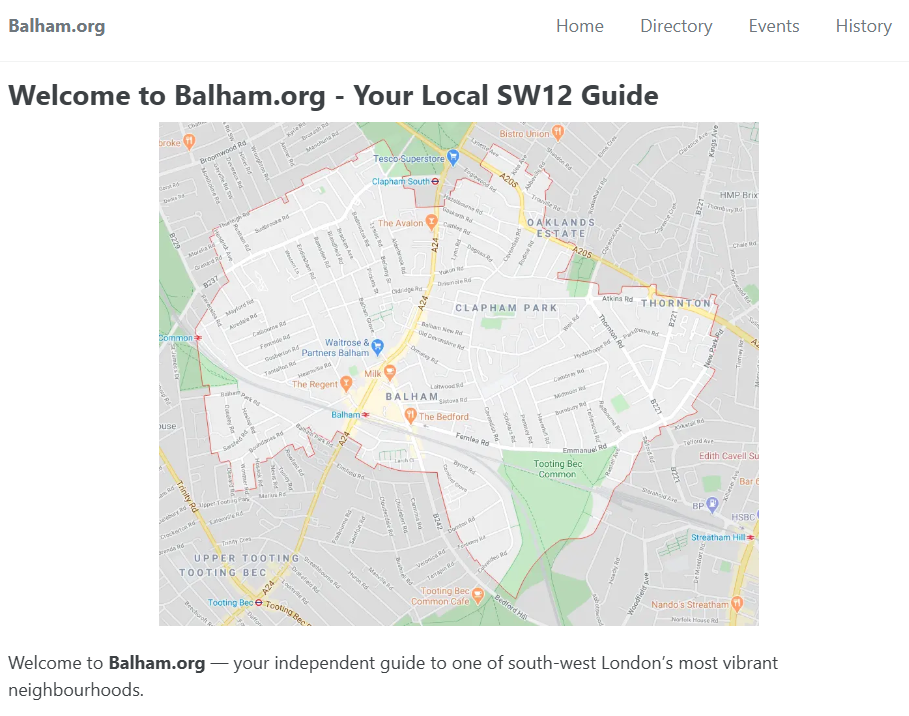

A few days ago, I looked at an unused domain I owned — balham.org — and thought: “There must be a way to make this useful… and maybe even make it pay for itself.” So I set myself a challenge: one day to build something genuinely useful. A site that served a real audience (people… Continue reading Building a website in a day — with help from ChatGPT

Category: web

How I build websites in 2025

I built and launched a new website yesterday. It wasn’t what I planned to do, but the idea popped into my head while I was drinking my morning coffee on Clapham Common and it seemed to be the kind of thing I could complete in a day – so I decided to put my original… Continue reading How I build websites in 2025

Checking Copyright

There’s a lot of material out there on the internet. And the nature of the internet means that it’s easy to reuse that material without paying any attention to copyright. If my browser can display an image, then I can save that image to my local disk and then, perhaps, use it on my own… Continue reading Checking Copyright

Redirecting RSS

I’ve harped on about this before, but I firmly believe that when you publish a URL on the web then it should be permanent. Of course you might want to change the way that your site is set up at some point in the future, but when you do that you should do everything you… Continue reading Redirecting RSS

Credit Where Credit Is Due

I spend a lot of time here complaining about broken web sites, so it’s nice to be able to praise something that worked better than expected. And I’m slightly surprised to be able to report an impressive experience with a UK government web site. One thing that I found whilst sorting through my study over… Continue reading Credit Where Credit Is Due

Google Calendar Spam

Is anyone else getting Google Calendar spam? About half a dozen times in the last month I’ve got an SMS message telling me that I’ve received an invitation to an event on my Google Calendar and when I check the calendar it’s actually some kind of 419 spam. I suppose that it was inevitable that… Continue reading Google Calendar Spam

Human Dinosaurs

Having just been saying how much I like the new Guardian URL scheme, it was interesting to see the URL for this article from today’s paper. The article is about some early hominan[1] remains that have been found in northern Spain. The URL is http://www.guardian.co.uk/science/2008/mar/27/archaeology.dinosaurs I can obviously see why it’s in the science section.… Continue reading Human Dinosaurs

Guardian URLs

I’m a great believer in the idea that URLs should be permanent. When I publish something on the web then (hopefully) people link to it, and it would be nice to think that those links still work in five, ten or fifty years time. A few months ago I changed the URL scheme for davblog,… Continue reading Guardian URLs

Redesigning

It’s nine years since I registered the domain dave.org.uk and set up a web site there. And I’ve never really known what to do with it. Since I started blogging, it’s seemed even less useful. The blog front page was where all the interesting stuff happened. The main page just contained links to a few… Continue reading Redesigning

Google Sees All

An interesting story in today’s Telegraph. Apparently the photo that proved that John and Anne Darwin were together in Panama was found by someone searching for “John Anne Panama” in Google. I’ve just tried it and it still works. Searching for “John Anne Panama” in Google image search brings back a picture of them from… Continue reading Google Sees All